There is a near-infinite number of ways to build a WordPress site. In most cases, the setup can be broken into three categories: Delivery, Processing, and Storage.

Delivery

The first (or last) step in processing a request for a WordPress site is to look at how it is delivered. This covers anything that handles returning data to the visitor. Services covers software like Apache, Nginx, or Varnish. CDN covers either a reverse proxy service like Cloudflare or a traditional CDN like Cloudfront. Last, Plugins covers anything on the WordPress side that assists with delivery, such as a Varnish/Cloudflare plugin or a caching plugin like SuperCache or W3 Total Cache.

Most options here provide similar performance, and each comes with trade-offs once configured. For example, Cloudflare is a great replacement for both a CDN service and a cache service like Varnish. It is, however, outside the direct control of your network and can cause issues when developing a site, for instance by caching CSS or JS files you are still modifying. If you run a Varnish cluster directly, you need to take into account the total network load required for static files or inbound attacks. A cluster may also require features such as network load balancing or IP failover at the datacenter level, which for some is simply not available.

The simplest solution for small WordPress sites is to use an Nginx or Apache service to handle all requests and put the domain behind Cloudflare. This provides some level of network protection and reduces complexity.

Processing

Possibly the most important stage is the actual processing of requests, i.e. WordPress itself and the PHP process running it. In terms of WordPress, the best steps to take here are to ensure that WordPress is up to date and that there isn’t too much “junk” installed. Most issues I see with WordPress sites are at this level, with some “slow” WordPress sites running over 200 plugins. The more critical area to look at is PHP, as there are a number of ways to optimize it or ensure it is running smoothly.

When looking at PHP we want to look at two details: how PHP is running and its version. I typically recommend ensuring that PHP is up to date and running as an FCGI or FPM style process. There are other ways to run PHP that are more typically used with Apache: suPHP, ruid2, and CGI. Each one has its benefits and its pitfalls. suPHP and ruid2 are useful in shared environments or environments with a large number of websites that need to run as their own user; however, they add overhead, including the need for a process to start up before it can serve a request. They also do not benefit from memory-based caching solutions such as OpCache. FCGI and FPM both operate as a “pool” based system: processes are already running in memory and can make use of shared memory more readily. These, however, are not ideal for shared environments or environments with a large number of separate websites, as they add a large amount of overhead in terms of RAM and process count on large servers.
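To make the RAM trade-off of pool-based setups concrete, here is a rough back-of-the-envelope sketch in Python. The function names and every number in it (site count, workers per pool, memory per worker) are hypothetical and only for illustration; real figures have to be measured on the server in question.

```python
def pool_memory_mb(sites, workers_per_pool, avg_worker_mb):
    """Rough RAM needed if every site keeps its own pool of idle workers."""
    return sites * workers_per_pool * avg_worker_mb


def max_workers(available_mb, avg_worker_mb):
    """Upper bound on worker processes that fit in the RAM set aside for PHP."""
    return available_mb // avg_worker_mb


# Hypothetical shared server: 200 sites, 5 workers each, 40 MB per worker.
shared_server_mb = pool_memory_mb(sites=200, workers_per_pool=5, avg_worker_mb=40)

# Hypothetical single-site server with 4 GB reserved for PHP workers.
single_site_workers = max_workers(available_mb=4096, avg_worker_mb=40)

print(shared_server_mb, single_site_workers)
```

Under these assumed numbers, the shared server would need roughly 40 GB just to keep idle workers resident, while a single site comfortably fits around a hundred workers in 4 GB. That is the reason per-site pools scale poorly on servers hosting many separate websites.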

Last, when dealing with PHP there are a few other considerations to take into account, primarily PHP modules. The use of an opcode caching module like OpCache can provide a significant performance boost when used with FCGI or FPM. We also want to ensure that modules that are not used are removed. Some server setups enable every common PHP module by default to ensure any typical website can run. Disabling unneeded modules can add just a hair more performance when trying to squeeze out every last ounce of speed.

Storage

The last stage is the storage of data. There are two main focuses: the actual drive for files and the database setup. The biggest recommendation I can make is to be on a server using an SSD, simply because such servers are cheap now and the difference between a spindle-based drive and an SSD is immense.

A setup such as a 512GB SSD for your server’s primary data and a 2TB HDD for local backups would be an example of an ideal configuration. Data can also be split up if space is an issue; for example, large download files or videos that are not accessed frequently can be placed on an HDD.

The second key area is the database storage. WordPress by default only supports MySQL or its derivatives such as MariaDB or Percona. Any of these will work and, when configured correctly, should not be an issue. MySQL should be considered the slowest part of the site, so ensure that requests to it are made only when absolutely needed and that those requests are well optimized, with proper keys and indexes used by the query and defined on the tables.
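As a small illustration of why indexing matters, the sketch below uses Python's built-in `sqlite3` module as a stand-in for MySQL (an assumption made so the example runs anywhere; on a real site the equivalent check would be MySQL's own `EXPLAIN`). The `orders` table and its index name are hypothetical.

```python
import sqlite3


def query_plan(conn, sql, params=()):
    """Return the planner's description of how it would run the query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # The last column of each row is the human-readable detail text.
    return " | ".join(row[3] for row in rows)


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, i * 1.5) for i in range(1000)],
)

lookup = "SELECT id, total FROM orders WHERE customer_id = ?"

# Without an index the planner has to scan the whole table.
plan_before = query_plan(conn, lookup, (42,))

# With an index on the filtered column it can search instead of scan.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = query_plan(conn, lookup, (42,))

print(plan_before)
print(plan_after)
```

The exact wording of the plan text varies between SQLite versions, but the first plan reports a full scan and the second names the index. The same habit of checking the plan for a slow query applies to MySQL and its derivatives.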

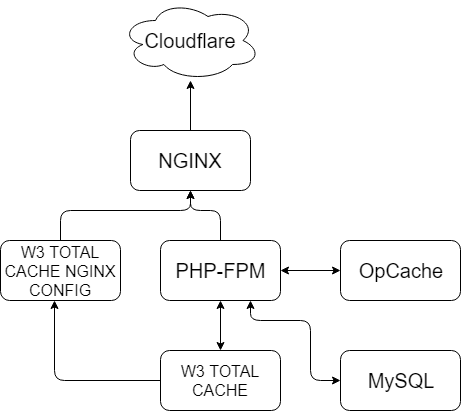

For reference, this site/server uses the following design:

While this may seem complicated, it is quite simple and its primary purpose is to serve requests as quickly as possible with the least redundancy.

Starting from the top, I use Cloudflare as a reverse proxy and CDN. This works well as Cloudflare will cache and serve static content such as images or CSS/JS files faster than the server can. From there I use Nginx to handle everything server-side. It is tied into the W3 Total Cache include file so W3 can direct Nginx to where pre-generated files are located. If the request can be handled here, it stops. If the request cannot be handled, for example because there is no cache file present, it is passed to PHP-FPM. PHP-FPM is configured with OpCache so all of the PHP scripts can be kept precompiled in memory. This reduces disk thrashing, as otherwise on a large site the server may need to read several hundred or several thousand PHP files, which, depending on the amount of traffic, can significantly increase page load time.
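The layered lookup described above can be sketched in a few lines of Python. This is only a toy model, not how Cloudflare, Nginx, or W3 Total Cache are actually implemented: the dictionaries stand in for the edge cache and the on-disk page cache, and `serve` and `render_with_php` are hypothetical names.

```python
def serve(path, static_edge, page_cache, render_with_php):
    """Return (body, layer) for a request, trying the cheapest layer first."""
    # 1. Edge (Cloudflare in this article): static assets only in this sketch.
    if path in static_edge:
        return static_edge[path], "edge"
    # 2. Web server (Nginx): serve a pre-generated page if one exists.
    if path in page_cache:
        return page_cache[path], "page-cache"
    # 3. PHP (PHP-FPM): render the page. The caching plugin is assumed to
    #    write the result back so the next request stops at step 2.
    body = render_with_php(path)
    page_cache[path] = body
    return body, "php"


static_edge = {"/style.css": "body { margin: 0 }"}
page_cache = {}
render_calls = []


def render_with_php(path):
    """Stand-in for the expensive WordPress/PHP render."""
    render_calls.append(path)
    return f"<html>rendered {path}</html>"


first = serve("/about/", static_edge, page_cache, render_with_php)
second = serve("/about/", static_edge, page_cache, render_with_php)
asset = serve("/style.css", static_edge, page_cache, render_with_php)

print(first[1], second[1], asset[1], len(render_calls))
```

The point of the ordering is that each layer is more expensive than the one before it, so a request should stop at the first layer that can answer it.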

When the request enters PHP, W3 Total Cache is used to handle and update the cache directory that Nginx checks. If the response to the current request can be cached for future requests, we do so. The last step is actually in MySQL. By default on MySQL 5.6, query caching is disabled. While there are benefits to doing so when using InnoDB, for a static or mostly static site such as a WordPress site, re-enabling it and configuring it properly will in many cases make a night and day difference if you are using a spindle drive or simply have a query-heavy site.
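To show why a query cache suits a mostly static site, here is a minimal Python sketch of the general idea: results are stored under the exact query text and thrown away when a table they read from is written to. The `QueryCache` class is a hypothetical toy, not MySQL's implementation, and `run_query` is a stand-in for the real database call.

```python
class QueryCache:
    """Toy result cache keyed on the exact SQL text."""

    def __init__(self, run_query):
        self._run_query = run_query  # callable that actually hits the database
        self._entries = {}           # sql text -> (tables read, cached rows)

    def select(self, sql, tables):
        """Return cached rows if present, otherwise run and remember the query."""
        if sql in self._entries:
            return self._entries[sql][1]
        rows = self._run_query(sql)
        self._entries[sql] = (frozenset(tables), rows)
        return rows

    def invalidate(self, table):
        """Call after any write to `table`: drop every result that read it."""
        self._entries = {
            sql: entry for sql, entry in self._entries.items()
            if table not in entry[0]
        }


db_hits = []


def run_query(sql):
    """Stand-in for the database; records how often it is really queried."""
    db_hits.append(sql)
    return [("row for", sql)]


cache = QueryCache(run_query)
query = "SELECT * FROM wp_posts WHERE post_status = 'publish'"

cache.select(query, ["wp_posts"])
cache.select(query, ["wp_posts"])   # answered from the cache
hits_before_write = len(db_hits)

cache.invalidate("wp_posts")        # e.g. a post was edited
cache.select(query, ["wp_posts"])   # has to hit the database again
hits_after_write = len(db_hits)

print(hits_before_write, hits_after_write)
```

On a site where posts rarely change, almost every read takes the cached path. On a write-heavy site, constant invalidation removes most of the benefit, which is the trade-off to weigh before enabling it.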

There are many other changes that could be made. For example, more interactive sites such as eCommerce sites may wish to use some form of memory caching such as APCU, Redis, or Memcache. Image-heavy sites may wish to rely more heavily on an external CDN like Cloudfront to offload images. We can also cluster systems like PHP-FPM so that Nginx, or multiple Nginx servers, can pass each request to the most available server.
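One simple way to read "most available server" is "the backend with the fewest requests currently in flight". The Python sketch below illustrates that selection rule only; the `LeastBusyPool` class and the backend names are hypothetical, and a real deployment would rely on the load balancing built into the web server or proxy rather than custom code.

```python
class LeastBusyPool:
    """Pick the backend with the fewest requests currently in flight."""

    def __init__(self, backends):
        self.active = {name: 0 for name in backends}

    def acquire(self):
        """Choose the least busy backend and count the new request against it."""
        name = min(self.active, key=self.active.get)
        self.active[name] += 1
        return name

    def release(self, name):
        """Call when the backend has finished handling a request."""
        self.active[name] -= 1


pool = LeastBusyPool(["php-a", "php-b", "php-c"])

# Four requests arrive before any of them finishes.
picked = [pool.acquire() for _ in range(4)]

# php-b finishes first, so it becomes the least busy backend again.
pool.release("php-b")
next_pick = pool.acquire()

print(picked, next_pick)
```

Ties are broken by the order in which the backends were listed, so with equal load the sketch simply cycles through them.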